Schöne Lügen: How AI-Generated Influencers Are Shaping Far-Right Politics in Germany

On a quiet Tuesday in April 2026, 22-year-old “Larissa” from Senftenberg, Germany, posted a video on Instagram. Sitting on a pastel sofa, she praised the Alternative for Germany (AfD) party, calling its leader Alice Weidel “a beacon of hope” ahead of the upcoming federal elections. The video, polished and professional, racked up thousands of views within hours. There was just one problem: Larissa doesn’t exist.

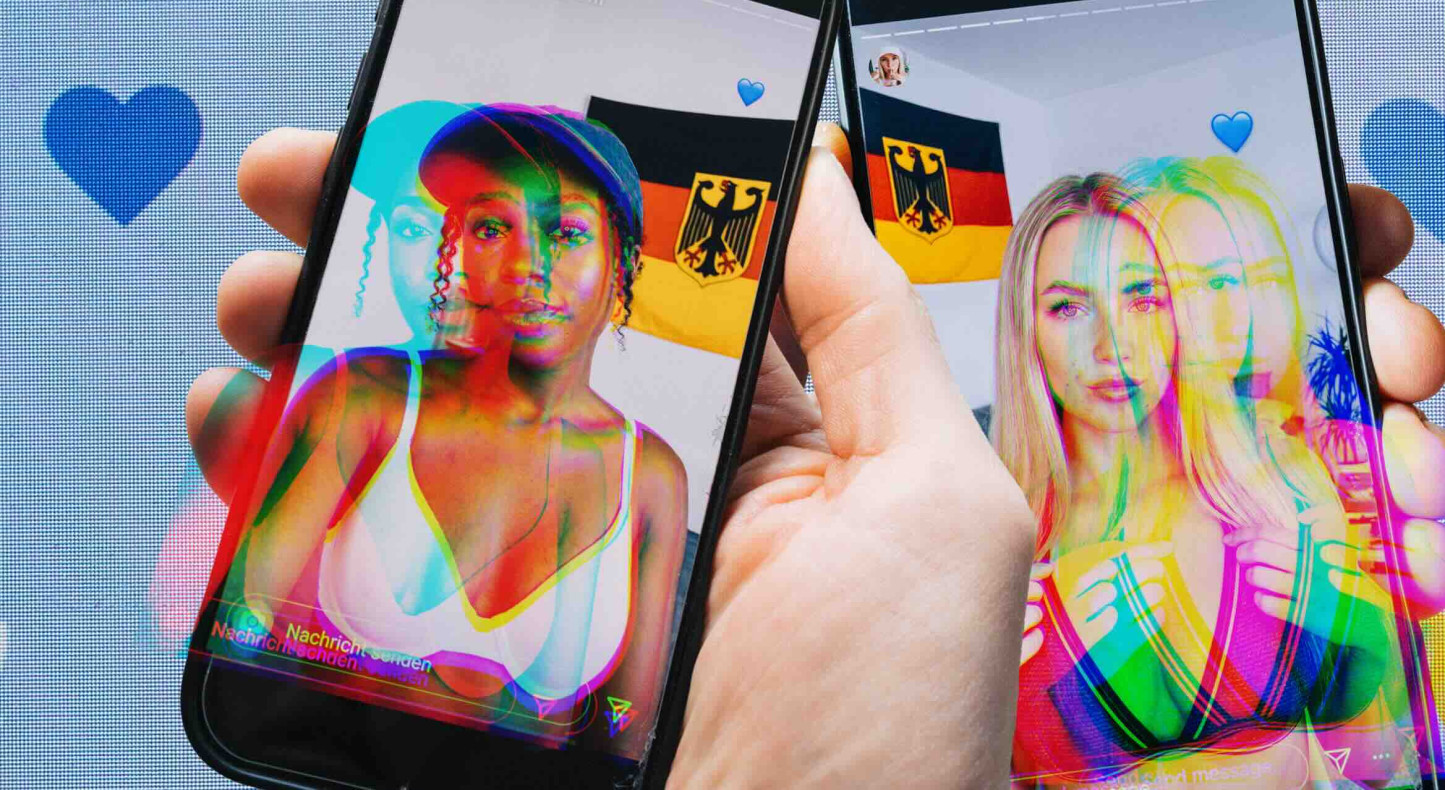

The account, like dozens of others across social media platforms, is a creation of artificial intelligence. These AI-generated influencers—often young, attractive women—are being deployed at scale to amplify far-right messaging, particularly in support of the AfD, Germany’s controversial right-wing party. Their posts blend political propaganda with lifestyle content, making extremist views more palatable to younger audiences. Experts warn this is not just a fringe experiment but a coordinated campaign with the potential to reshape political discourse in Europe’s largest democracy.

This investigation, based on verified reports from German fact-checking organizations and independent research, reveals how AI-generated personas are being weaponized to spread disinformation, circumvent platform moderation, and normalize far-right ideology. The phenomenon raises urgent questions about the intersection of technology, democracy, and the ethics of digital influence.

The Anatomy of an AI Influencer

Larissa is one of the most visible examples of this trend. Her profile, which includes accounts on Instagram, X (formerly Twitter), and TikTok, presents her as a young woman from Brandenburg, a region where the AfD has gained significant traction. Her posts—ranging from political commentary to seemingly mundane lifestyle content—are designed to appear authentic. In one video, she wears an AfD-branded T-shirt while sipping coffee; in another, she praises Weidel’s “strong leadership” during a party conference. Yet, a closer look reveals telltale signs of artificiality: slightly unnatural facial movements, repetitive phrasing, and a bio that describes her as a “KI Maus” (AI mouse), a playful but transparent nod to her synthetic origins.

According to a joint investigation by BR24’s Faktenfuchs, ARD Faktenfinder, and DW Fact Check, Larissa’s content is generated using advanced AI tools, including text-to-speech software and image synthesis models. The videos are produced with such sophistication that they often fool casual viewers. The investigation found that Larissa’s account had amassed over 4,000 followers on X and nearly 500 on Instagram by early 2025, with engagement rates comparable to those of real influencers. The content is not limited to political messaging; some posts flirt with eroticism, using suggestive imagery to attract a broader audience before pivoting to AfD talking points.

The use of AI-generated personas is not new, but their application in political campaigns marks a dangerous evolution. Unlike traditional bots, which often rely on spammy, low-quality content, these influencers mimic human behavior with alarming precision. They post at optimal times, engage with followers in comment sections, and even “react” to current events in real time. This level of sophistication makes them far more effective at spreading disinformation than their predecessors.

Who Is Behind the Campaign?

The origins of these AI influencers remain shrouded in mystery. While the AfD has not officially acknowledged any connection to the accounts, the party’s digital strategy has long embraced unconventional tactics. The AfD’s official Instagram account, @afd.bund, boasts over 417,000 followers and frequently shares content that aligns with the messaging of AI-generated profiles. Some of the AI influencers have even reposted content from the AfD’s official channels, blurring the line between party-sanctioned propaganda and independent advocacy.

German fact-checkers have traced some of the AI-generated accounts to networks of far-right activists and digital marketing firms with ties to extremist groups. A report by Business Punk revealed that several of these profiles were created using the same AI tools and followed identical posting patterns, suggesting a coordinated effort. The report also noted that the accounts often amplify each other’s content, creating an echo chamber that artificially inflates their reach.
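
As a rough illustration of what "identical posting patterns" can mean in practice, the sketch below compares the time slots in which accounts post and flags pairs that overlap heavily. It is not the method used in the report cited above. The function names, the (weekday, hour) slot format, and the 0.8 threshold are all assumptions chosen for the example.

```python
from itertools import combinations


def jaccard(a, b):
    """Overlap of two collections: size of intersection / size of union."""
    a, b = set(a), set(b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def similar_pairs(slots_by_account, threshold=0.8):
    """Return (account_a, account_b, score) for pairs with similar schedules.

    slots_by_account maps a hypothetical account name to the (weekday, hour)
    slots in which it posted. The threshold is an arbitrary assumption.
    """
    pairs = []
    for name_a, name_b in combinations(sorted(slots_by_account), 2):
        score = jaccard(slots_by_account[name_a], slots_by_account[name_b])
        if score >= threshold:
            pairs.append((name_a, name_b, score))
    return pairs


# Made-up example data, not real accounts.
example = {
    "account_a": [("Mon", 9), ("Tue", 18)],
    "account_b": [("Mon", 9), ("Tue", 18)],
    "account_c": [("Sun", 3)],
}
flagged = similar_pairs(example)
```

A high overlap score is only a hint. Unrelated accounts can share a schedule by coincidence, so a result like this would need to be checked against other evidence, such as shared tooling or mutual reposting.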

One of the most concerning aspects of this campaign is its financial opacity. Unlike traditional political advertising, which is subject to disclosure laws, AI-generated influencers operate in a legal gray area. There are no clear regulations requiring transparency about the use of synthetic media in political campaigns, making it difficult to track who is funding these efforts. German election authorities have begun to take notice, but enforcement remains challenging. In a statement to the Süddeutsche Zeitung, a spokesperson for the Federal Returning Officer acknowledged the “novel risks” posed by AI-generated content but admitted that current laws are ill-equipped to address them.

The Psychological Playbook: Why AI Influencers Work

The success of AI-generated influencers lies in their ability to exploit psychological vulnerabilities. Research in digital marketing and political communication has shown that people are more likely to trust content that appears to come from relatable, attractive individuals. A study published in the Journal of Computer-Mediated Communication found that social media users are significantly more likely to engage with political content when it is presented by influencers who share their demographic characteristics. By creating young, conventionally attractive women, the architects of this campaign are tapping into this bias, making their messages more persuasive to a key demographic: young, male voters.

The blend of political messaging with lifestyle and erotic content serves a dual purpose. On one hand, it attracts a broader audience that might not otherwise engage with far-right politics. On the other, it normalizes extremist views by embedding them in seemingly innocuous content. This tactic, known as “soft radicalization,” has been used by extremist groups for decades, but AI has made it easier than ever to scale.

Dr. Julia Ebner, a senior researcher at the Institute for Strategic Dialogue and author of Going Dark: The Secret Social Lives of Extremists, warns that this strategy is particularly effective with younger audiences. “Young people are more likely to consume content from influencers than from traditional news sources,” she told World Today Journal. “When that content is generated by AI, it becomes even harder to distinguish fact from fiction. The result is a perfect storm for disinformation.”

The Global Implications

While this phenomenon is currently most visible in Germany, experts warn that it could quickly spread to other countries. The AfD’s use of AI-generated influencers is part of a broader trend of far-right groups leveraging technology to bypass traditional gatekeepers of information. In the United States, similar tactics have been observed in the lead-up to the 2024 presidential election, with AI-generated deepfake videos and synthetic personas being used to spread misinformation about candidates and policies.

The European Union has taken steps to address the threat of AI-generated disinformation. The Digital Services Act (DSA), which came into full effect in February 2024, requires social media platforms to identify and remove illegal content, including disinformation. Still, the law does not explicitly address AI-generated influencers, leaving a significant loophole. In response, the European Commission has proposed new regulations that would require platforms to label synthetic media and disclose the use of AI in political advertising. These measures, however, are still in the early stages of implementation.

In Germany, the Federal Office for the Protection of the Constitution (BfV) has classified the AfD as a “suspected case” of extremism, a designation that allows for increased surveillance of the party’s activities. While this has led to greater scrutiny of the AfD’s digital campaigns, it has not stopped the proliferation of AI-generated influencers. The BfV has called for stricter regulations on synthetic media, but political gridlock has delayed meaningful action.

How to Spot an AI-Generated Influencer

As AI technology continues to improve, distinguishing between real and synthetic influencers is becoming increasingly difficult. However, there are still red flags that can help users identify AI-generated content:

- Unnatural facial movements: AI-generated videos often feature subtle glitches, such as unblinking eyes, stiff facial expressions, or awkward head tilts. These artifacts are particularly noticeable in close-up shots.

- Repetitive phrasing: AI-generated text often relies on stock phrases and lacks the nuance of human speech. If an influencer’s posts sound overly scripted or formulaic, it may be a sign of synthetic content.

- Inconsistent details: AI-generated personas often struggle with consistency. For example, an influencer might claim to be from one city in one post and another in the next, or their age might change over time.

- Lack of personal history: Real influencers often share personal stories, photos with friends and family, and other details that build a sense of authenticity. AI-generated accounts, by contrast, tend to focus narrowly on their primary messaging, whether political or commercial.

- Overly polished content: While real influencers occasionally post unedited or spontaneous content, AI-generated accounts tend to produce highly polished, professional-looking videos and images with little variation.
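
Of the red flags above, repetitive phrasing is the easiest to approximate in code. The following is a minimal sketch, assuming you have an account's posts as plain text. It measures how many three-word phrases recur across different posts. The function name and the choice of three-word phrases are assumptions for illustration, and a high score does not prove an account is synthetic.

```python
from collections import Counter


def repeated_phrase_ratio(posts, n=3):
    """Share of distinct n-word phrases that appear in more than one post."""
    seen = Counter()
    for post in posts:
        words = post.lower().split()
        # Use a set so a phrase is counted at most once per post.
        grams = {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}
        seen.update(grams)
    if not seen:
        return 0.0
    repeated = sum(1 for count in seen.values() if count > 1)
    return repeated / len(seen)


# Made-up example posts, not taken from any real account.
sample_posts = [
    "strong leadership is what we need",
    "strong leadership is the answer",
]
ratio = repeated_phrase_ratio(sample_posts)
```

Human writers also reuse slogans, so a score like this is best treated as one signal among several, alongside the visual and biographical checks in the list.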

Platforms like Instagram and X have begun to implement tools to label AI-generated content, but these measures are not yet widespread. Users are encouraged to report suspicious accounts to platform moderators, but the burden of detection largely falls on individuals.

What Happens Next?

The use of AI-generated influencers in political campaigns is likely to escalate as technology advances and regulations lag behind. In Germany, the AfD’s growing popularity—it currently polls at around 20% nationally, making it the second-most popular party in some regions—suggests that these tactics are resonating with voters. The party’s success in the 2025 federal elections could embolden other far-right groups to adopt similar strategies, both in Europe and beyond.

For now, the most effective countermeasure is public awareness. Organizations like Correctiv and Mimikama are working to educate the public about the dangers of AI-generated disinformation, but their reach is limited. Social media platforms, too, must take greater responsibility for identifying and removing synthetic content, particularly when it is used to spread political propaganda.

As the 2026 federal elections approach, German authorities are under increasing pressure to act. The Federal Ministry of the Interior has announced plans to establish a task force dedicated to combating AI-generated disinformation, but details remain scarce. In the meantime, voters are left to navigate a digital landscape where the line between reality and fiction is increasingly blurred.

Key Takeaways

- AI-generated influencers are being used to amplify far-right messaging in Germany, particularly in support of the AfD. These synthetic personas blend political propaganda with lifestyle content to make extremist views more palatable to younger audiences.

- The campaign is highly coordinated and financially opaque. While the AfD has not officially acknowledged any connection to the accounts, fact-checkers have traced them to networks of far-right activists and digital marketing firms.

- AI influencers exploit psychological biases to increase engagement. By presenting as young, attractive women, these accounts tap into cognitive shortcuts that make their messages more persuasive.

- Current regulations are ill-equipped to address the threat. German and EU laws do not explicitly require transparency about the use of AI in political campaigns, leaving a significant loophole for disinformation.

- Public awareness is the most effective countermeasure. Users are encouraged to report suspicious accounts and familiarize themselves with the red flags of AI-generated content.

FAQ

Are AI-generated influencers legal?

Currently, there are no laws in Germany or the EU that explicitly prohibit the use of AI-generated influencers in political campaigns. However, the Digital Services Act requires platforms to remove illegal content, including disinformation. The legality of these accounts often hinges on whether their content violates existing laws, such as those against hate speech or incitement to violence.

How can I tell if an influencer is AI-generated?

Look for unnatural facial movements, repetitive phrasing, inconsistent details, a lack of personal history, and overly polished content. Platforms like Instagram and X are also beginning to label AI-generated content, but these measures are not yet universal.

What is the AfD’s stance on AI-generated influencers?

The AfD has not publicly acknowledged any connection to AI-generated influencers. However, the party’s digital strategy has long embraced unconventional tactics, and its official social media accounts frequently share content that aligns with the messaging of synthetic profiles.

What are the risks of AI-generated influencers in politics?

The primary risk is the spread of disinformation. AI-generated influencers can create the illusion of grassroots support for a political party or ideology, making it appear more popular than it actually is. This can distort public perception and influence election outcomes. The use of synthetic media also undermines trust in digital communication, making it harder for users to distinguish between real and fake content.

What is being done to regulate AI-generated influencers?

The EU’s Digital Services Act requires platforms to identify and remove illegal content, but it does not explicitly address AI-generated influencers. The European Commission has proposed new regulations that would require platforms to label synthetic media, but these measures are still in development. In Germany, the Federal Office for the Protection of the Constitution has called for stricter regulations, but political gridlock has delayed action.

What You Can Do

If you encounter an AI-generated influencer, report it to the platform. Most social media sites have mechanisms for flagging suspicious accounts. You can also support organizations that work to combat disinformation, such as Correctiv and Mimikama. Finally, educate yourself and others about the red flags of synthetic media. In an era where AI can create convincing fake personas, critical thinking is more important than ever.

The next major development in this story is likely to come in the lead-up to Germany’s federal elections in September 2026. As the campaign heats up, expect to see an increase in AI-generated content, both from the AfD and its opponents. For now, the best defense is vigilance.