OpenAI has launched a new, free version of its AI assistant tailored for verified healthcare professionals in the United States. Called ChatGPT for Clinicians, the tool is designed to support physicians, nurse practitioners, physician assistants, and pharmacists with clinical documentation, medical research, and care coordination tasks. The platform operates within a secure workspace and uses a specialized version of the GPT-5.4 language model.

According to OpenAI, the clinician-focused version of GPT-5.4 has demonstrated strong performance on a new benchmark called HealthBench Professional, which evaluates AI systems on real-world clinical tasks such as documentation, medical reasoning, and patient communication. In these evaluations, the model outperformed both the base GPT-5.4 and leading AI systems from competing developers.

Notably, OpenAI reports that the clinician-tuned GPT-5.4 model also scored higher than human physicians who were given unlimited time and internet access to complete the same HealthBench Professional tasks. The model achieved a top score of 59.0 on the benchmark, a result the company says reflects its enhanced ability to handle complex clinical workflows accurately and efficiently.

The development of ChatGPT for Clinicians involved extensive collaboration with hundreds of practicing physicians. These medical advisors reviewed more than 700,000 model responses during testing to help refine the AI’s reasoning, accuracy, and safety. Their feedback was used to train the system to better support clinical decision-making while reducing the administrative burden on healthcare workers.

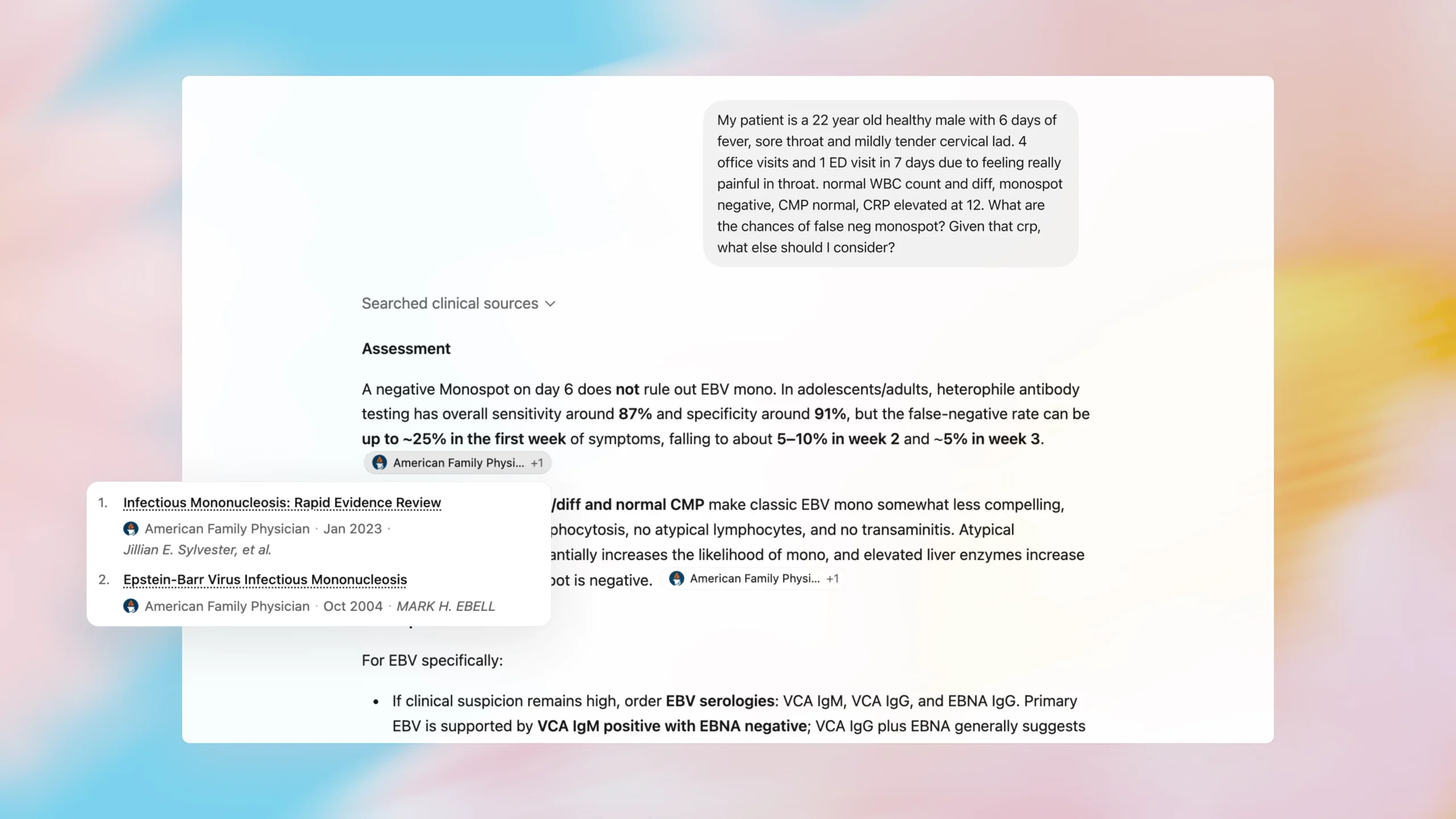

One of the key features of the platform is its ability to provide real-time, citable information from peer-reviewed medical sources. When answering clinical questions, the tool is designed to include references to current research, helping users verify the basis of its responses. This feature aims to support evidence-based practice and reduce the time clinicians spend manually searching literature.

Another feature lets users create customizable "skills", which are reusable workflows that automate repetitive tasks. For example, a clinician could configure the tool to follow their hospital's specific template for writing referral letters or discharge instructions. Once configured, the skill can be applied consistently across similar tasks, promoting standardization and saving time.

The platform also includes automated tracking for continuing medical education (CME) credits. When clinicians use the tool to review medical literature or complete educational activities, the system can log these interactions and help users document their progress toward meeting licensure requirements. This integration aims to simplify a process that many providers find tedious and time-consuming.

Privacy and data security are central to the design of ChatGPT for Clinicians. By default, conversations within the clinician workspace are not used to train OpenAI's general AI models. This "no-training" policy means that patient-specific information or clinical notes entered into the system are not used for model improvement, addressing a key concern about data reuse in healthcare AI.

For healthcare organizations that handle protected health information (PHI), the platform offers the option to enter into a Business Associate Agreement (BAA) with OpenAI. This contractual arrangement allows the tool to be used in compliance with the Health Insurance Portability and Accountability Act (HIPAA), which sets national standards for the protection of sensitive patient data in the U.S.

Access to the platform requires multi-factor authentication, adding a layer of security to prevent unauthorized entry. OpenAI states that these safeguards are intended to align with the strict regulatory expectations of U.S. healthcare institutions, where data breaches and privacy violations carry significant legal and financial risks.

Before release, the system underwent rigorous safety testing involving clinical experts. Physician advisors evaluated nearly 7,000 sample conversations and rated 99.6% of the model’s responses as safe and accurate. In additional testing, the AI was found to cite correct medical sources more frequently than human physicians in certain scenarios where factual accuracy was critical.

Despite these strong results, OpenAI emphasizes that the tool is intended to assist, not replace, clinical judgment. The company states that ChatGPT for Clinicians should be used as a support resource — helping with information gathering, drafting, and organization — while final decisions about patient care remain the responsibility of the licensed healthcare provider.

The launch reflects broader trends in healthcare technology adoption. Recent data from the American Medical Association shows that 72% of physicians now use some form of artificial intelligence in their practice, up from 48% the previous year. This growth highlights increasing openness to AI-assisted tools, particularly those that reduce administrative workload and improve access to medical knowledge.

By offering the tool at no cost to verified professionals, OpenAI aims to lower barriers to entry and encourage widespread testing and feedback. The company says it views the launch as an early step in a longer process of refining AI for clinical use, with ongoing input from frontline providers shaping future updates.

As healthcare systems continue to grapple with clinician burnout and rising demands for documentation and compliance, tools like ChatGPT for Clinicians represent one approach to alleviating cognitive load. Whether such technologies will be widely adopted depends on factors including ease of integration, perceived usefulness, and ongoing validation of safety and effectiveness in real-world settings.

For now, the platform is available to eligible healthcare workers in the United States who complete a verification process to confirm their professional status. Interested users can access the service through the official ChatGPT for Clinicians webpage, where they can review eligibility requirements and begin the registration process.

Moving forward, the real-world impact of the tool will depend on how it is implemented across different clinical environments. Hospitals, clinics, and individual providers will need to assess whether the technology fits their workflows, meets their security standards, and demonstrably improves efficiency without compromising care quality.

As with any emerging technology in healthcare, responsible adoption will require ongoing evaluation, clear guidelines, and attention to both the benefits and limitations of AI-assisted practice. Clinicians and administrators alike will play a key role in shaping how tools like this are used — ensuring they serve as aids to, rather than substitutes for, expert medical judgment.