The shift from experimental artificial intelligence to scalable, revenue-generating implementation is now visible in the hard numbers of the cloud industry. For enterprises, the challenge has moved beyond simply accessing large language models to solving the “data gravity” problem—how to move, manage, and analyze massive datasets in real time without the crushing overhead of traditional data pipelines.

Recent performance metrics suggest that growth in Microsoft’s Cosmos DB and Fabric is being driven heavily by this transition. As organizations integrate AI into their core operations, the demand for high-performance, AI-optimized databases has surged. Specifically, the Cosmos DB business grew 50% year-over-year, while the number of paid Microsoft Fabric customers increased by 60%, according to recent financial analysis.

This growth is not merely a result of increased cloud spending, but of a fundamental change in how data is architected. By uniting operational and analytical data in a single platform, Microsoft is attempting to remove the friction that typically slows AI deployment. The same pull is visible between Microsoft’s AI and data platforms: over 15,000 customers now use both Foundry and Fabric, a segment that has grown by 60%.

The Convergence of AI and Data Infrastructure

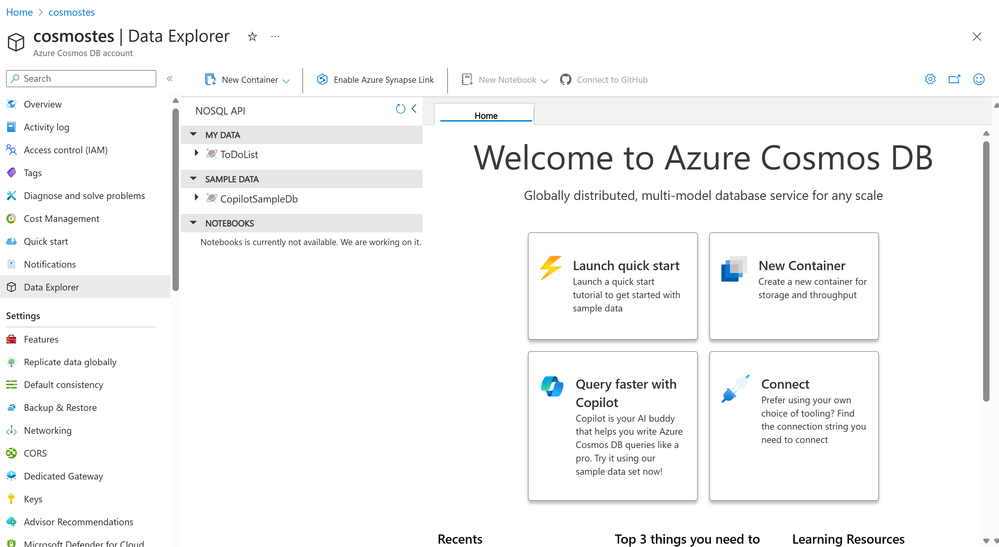

At the heart of this expansion is Azure Cosmos DB, a distributed NoSQL database designed for low latency and high availability. The 50% year-over-year growth in this segment highlights a critical trend: AI applications are only as effective as the data feeding them. Whether it’s powering a recommendation engine or a real-time fraud detection system, the ability to handle semi-structured or unstructured data at a global scale is now a prerequisite for enterprise AI.

Complementing this is Microsoft Fabric, an all-in-one analytics solution that simplifies the data estate. The rise in paid Fabric customers—now totaling 35,000—suggests that companies are moving away from fragmented data silos in favor of a unified environment. This shift allows businesses to consolidate their data engineering, data science, and real-time analytics into a single cohesive workflow.

Eliminating the ETL Bottleneck with General Availability

One of the most significant technical hurdles in data management is the ETL process—Extract, Transform, and Load. Traditionally, moving data from an operational database (where transactions happen) to an analytical warehouse (where insights are gathered) required complex, costly, and time-consuming pipelines that often resulted in “stale” data by the time it reached the analyst.

Microsoft has addressed this with the general availability of Cosmos DB in Microsoft Fabric and Cosmos DB Mirroring. This release allows organizations to analyze live Cosmos DB data directly within Fabric without the need for complex ETL. By keeping data in sync within OneLake—Microsoft’s unified data lake—the platform provides a single source of truth for both historical and real-time insights.
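The difference between a periodic ETL copy and continuous mirroring can be illustrated with a toy model. The sketch below is a conceptual illustration only, not how Fabric or Cosmos DB Mirroring is implemented: the class names `OperationalStore` and `MirroredReplica` and the function `batch_snapshot` are hypothetical, and the "change log" is a plain in-memory list.

```python
class OperationalStore:
    """Toy operational database that records every write in an ordered change log."""

    def __init__(self):
        self.items = {}
        self.change_log = []  # entries are (sequence, key, value)

    def upsert(self, key, value):
        self.items[key] = value
        self.change_log.append((len(self.change_log), key, value))


class MirroredReplica:
    """Toy analytical copy that replays only the changes it has not seen yet."""

    def __init__(self):
        self.items = {}
        self.next_sequence = 0

    def sync(self, store):
        for sequence, key, value in store.change_log[self.next_sequence:]:
            self.items[key] = value
            self.next_sequence = sequence + 1


def batch_snapshot(store):
    """Toy batch ETL job: a full copy taken at one point in time."""
    return dict(store.items)


store = OperationalStore()
replica = MirroredReplica()

store.upsert("order-1", "pending")
snapshot = batch_snapshot(store)    # the scheduled batch job runs here
replica.sync(store)

store.upsert("order-1", "shipped")  # a write lands after the batch job
replica.sync(store)                 # the mirror picks it up incrementally

print(snapshot["order-1"])          # pending (stale until the next batch)
print(replica.items["order-1"])     # shipped
```

The snapshot stays frozen at the moment the batch job ran, while the replica only has to replay the changes it has not yet applied, which is why an incremental approach can stay close to the live data.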

The practical impact of this “zero ETL” approach is substantial. Users can now write queries using T-SQL from a SQL Endpoint or utilize Python and Spark Notebooks within Fabric to interact with fresh operational data. This capability is essential for building AI-ready insights, where the delay between a customer action and an AI-driven response must be minimized.

“Cosmos DB in Fabric has been a home run for Kinectify. As a company, we store a lot of data in Cosmos DB, and being able to automatically, reliably and performantly move data with zero ETL into Fabric has allowed us to experiment with Fabric in ways that we simply would not have been able to if we had to setup the ETL ourselves.”

Powering AI with Vector Search and DiskANN

To make data truly “AI-ready,” it must be searchable in a way that machines understand. This is where vector indexing comes into play. Traditional databases search for exact matches (like a specific ID number), but AI requires vector search, which looks for “semantic similarity”—finding data points that are conceptually related even if they don’t share the same keywords.
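A small sketch can make the contrast between exact matching and semantic similarity concrete. The three-dimensional vectors below are hand-picked, hypothetical stand-ins for real embeddings (which typically have hundreds or thousands of dimensions and come from an embedding model), and cosine similarity is just one common choice of similarity measure.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, chosen by hand for illustration only.
documents = {
    "refund policy": [0.9, 0.1, 0.0],
    "money-back guarantee": [0.8, 0.2, 0.1],
    "office opening hours": [0.0, 0.2, 0.9],
}


def exact_match(query_text):
    """Traditional lookup: only an identical string is a hit."""
    return [name for name in documents if name == query_text]


def vector_search(query_vector, top_k=2):
    """Rank documents by similarity to the query vector and keep the top_k."""
    ranked = sorted(
        documents,
        key=lambda name: cosine_similarity(query_vector, documents[name]),
        reverse=True,
    )
    return ranked[:top_k]


query_text = "how do I get my money back"
query_vector = [0.85, 0.15, 0.05]  # assumed embedding of the query text

print(exact_match(query_text))      # no document has this exact title
print(vector_search(query_vector))  # the two refund-related documents rank first
```

The query shares no title with any stored document, so the exact lookup returns nothing, while the vector ranking still surfaces the two conceptually related entries.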

Cosmos DB in Microsoft Fabric is the first database in Azure to offer DiskANN, a graph-based indexing and search system. DiskANN allows the system to index, store, and search large sets of vector data while utilizing relatively small amounts of computational resources. This is a critical efficiency gain for companies training machine learning (ML) models or building Retrieval-Augmented Generation (RAG) systems, where the AI retrieves specific documents from a private database to provide more accurate, grounded answers.
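To give a rough sense of what "graph-based" search means, the sketch below walks a nearest-neighbor graph greedily toward a query point. It is a simplified, in-memory illustration and not DiskANN itself: the brute-force graph construction, the function names, and the `beam_width` parameter are assumptions made for this example, and a production index builds and stores its graph very differently.

```python
import heapq
import math


def build_knn_graph(points, k):
    """Link every point to its k nearest neighbors (brute force, for illustration)."""
    graph = {}
    for i, p in enumerate(points):
        others = [j for j in range(len(points)) if j != i]
        others.sort(key=lambda j: math.dist(p, points[j]))
        graph[i] = others[:k]
    return graph


def greedy_search(points, graph, query, start, beam_width=4):
    """Best-first walk over the graph, tracking the beam_width closest nodes seen."""
    visited = {start}
    frontier = [(math.dist(query, points[start]), start)]  # min-heap on distance
    best = list(frontier)
    while frontier:
        d, node = heapq.heappop(frontier)
        # Stop once the closest unexplored node is worse than every kept candidate.
        if len(best) >= beam_width and d > max(best)[0]:
            break
        for neighbor in graph[node]:
            if neighbor in visited:
                continue
            visited.add(neighbor)
            nd = math.dist(query, points[neighbor])
            heapq.heappush(frontier, (nd, neighbor))
            best.append((nd, neighbor))
        best = sorted(best)[:beam_width]
    return best[0][1], len(visited)


# A 10 x 10 grid of 2-D points stands in for a set of embeddings.
points = [(x, y) for x in range(10) for y in range(10)]
graph = build_knn_graph(points, k=4)

nearest, touched = greedy_search(points, graph, query=(7.2, 3.9), start=0)
print(points[nearest])  # (7, 4)
print(f"compared against {touched} of {len(points)} points")
```

The search only computes distances for points it reaches along the graph rather than scanning the whole collection, which is the general reason graph indexes can answer similarity queries with modest compute.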

By integrating DiskANN, Microsoft is enabling a faster pipeline from raw operational data to AI-driven action. This architecture allows developers to build dashboards and train ML models on fresh data, reducing the latency that previously hindered the deployment of real-time AI agents.

The Broader Azure Ecosystem and AI Monetization

The growth of Cosmos DB and Fabric does not happen in a vacuum; it is part of a larger upward trajectory for Microsoft’s cloud and AI ecosystem. Azure grew 40% year-over-year, supported by a $627 billion contracted backlog, as noted in recent financial reporting. This indicates a strong long-term commitment from enterprise clients to migrate their workloads to the cloud.

The monetization of AI is also accelerating through the adoption of M365 Copilot. The number of Copilot seats has increased by 250% year-over-year, and commercial remaining performance obligations (RPO) have risen by 99%. This suggests that the “AI hype” is converting into actual subscription revenue and long-term contracts.

From a strategic perspective, these developments create a virtuous cycle: as more companies adopt Copilot and other AI tools, they realize the need for better data infrastructure, which drives growth in Azure, Cosmos DB, and Fabric. This integrated approach—combining the productivity layer (Copilot), the platform layer (Azure), and the data layer (Fabric/Cosmos DB)—positions Microsoft to capture a significant share of the AI transformation spend.

Key Technical Takeaways

- Zero ETL: The general availability of Cosmos DB Mirroring removes the need for costly data pipelines, allowing real-time analysis of operational data.

- OneLake Integration: Data stays synchronized in a single location, providing a unified source of truth for AI and analytics.

- Vector Search: The introduction of DiskANN enables efficient, high-scale semantic search, which is foundational for modern AI/ML workloads.

- Rapid Adoption: 60% growth in Fabric paid customers and 50% growth in Cosmos DB signal an enterprise-wide shift toward AI-optimized data architectures.

As the industry moves toward more autonomous AI agents and complex RAG architectures, the ability to handle data with low latency and high semantic accuracy will remain the primary competitive advantage for cloud hyperscalers. Microsoft’s current trajectory suggests a concerted effort to own the entire data lifecycle, from the moment a piece of information is created in a NoSQL database to the moment it is used to generate an AI insight.

The next major checkpoint for the industry will be the upcoming quarterly earnings reports, which will provide further clarity on how CapEx investments in AI infrastructure are translating into sustained operating income growth.
