For millions of people worldwide, the first point of contact for a health concern is no longer a clinic or a pharmacy, but a smartphone. The rise of generative artificial intelligence has fundamentally changed how we seek medical information, with many turning to chatbots to interpret symptoms, decode complex medical reports, or decide whether a trip to the emergency room is necessary.

As a physician and journalist, I have watched this shift with a mixture of fascination and caution. The appeal is obvious: immediate, natural-language explanations that can simplify daunting technical jargon in seconds. However, the core question remains: will AI replace doctors? Even as the technology offers unprecedented accessibility, current evidence suggests that the gap between a statistical probability and a clinical diagnosis is a dangerous divide.

The convenience of these tools often masks a critical limitation. While an AI can synthesize vast amounts of data, it does not “understand” medicine in the way a human practitioner does. Instead, it calculates word probabilities, so it can sound authoritative and confident even when the scientific information it delivers is incorrect.

Recent data underscores the scale of this trend. According to estimates from the European Commission, more than 70% of people aged 16 to 74 in Europe search for health information online at least once a year, with an increasing number opting for virtual assistants over traditional search engines (AIRC).

The Illusion of Accuracy: Probability vs. Medical Reasoning

The primary danger of relying on AI for medical guidance is the “illusion of competence.” A study conducted by Washington State University revealed that AI models, such as ChatGPT, often present information with high levels of confidence, regardless of its accuracy. While initial tests might suggest a superficial accuracy rate of approximately 80%, researchers found that once the data was adjusted for the probability of guessing randomly, the actual reasoning capability of the AI dropped significantly (Sanità Informazione).
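To illustrate why a raw score can flatter a model, here is a minimal sketch of one common chance correction. It assumes true/false questions where blind guessing is right half the time. The function name and the 0.5 baseline are illustrative assumptions, not the method reported in the Washington State University study.

```python
def chance_corrected_accuracy(raw_accuracy: float, chance_level: float = 0.5) -> float:
    """Rescale accuracy so that chance_level maps to 0.0 and a perfect score maps to 1.0."""
    if not 0.0 <= chance_level < 1.0:
        raise ValueError("chance_level must be in [0, 1)")
    return (raw_accuracy - chance_level) / (1.0 - chance_level)


# Assumed inputs: an 80% raw score on questions with a 50% guessing baseline.
adjusted = chance_corrected_accuracy(0.80)
print(f"{adjusted:.2f}")
```

Under these assumptions, an 80% raw score corresponds to 0.60 on the rescaled range. The study's own adjusted figure is not given here, so this number is only an illustration of the arithmetic.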

This discrepancy exists because large language models (LLMs) are not trained to perform medical diagnostics; they are trained to predict the next most likely word in a sequence. When a patient asks about a symptom, the AI is not analyzing the biological state of the patient; it is calculating the most probable linguistic response based on its training data. This means a response can be structured impeccably and sound professional while lacking any real medical foundation.

The risk is particularly acute when dealing with “false myths” or medical misinformation. The Washington State University research highlighted a profound weakness in the ability of AI to identify false claims. While the systems are relatively effective at confirming true facts, they were only able to correctly identify false hypotheses in 16.4% of cases (Sanità Informazione).

The Danger of Inconsistency in Clinical Decisions

In medicine, consistency is a prerequisite for safety. A doctor’s advice on whether a specific medication is safe to combine with another must be based on pharmacological evidence, not a coin flip. However, AI models have demonstrated a troubling lack of consistency. In the aforementioned study, when the same scientific question was posed ten consecutive times, the AI frequently provided contradictory answers, alternating between “true” and “false” without any apparent logic (Sanità Informazione).
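A repeated-question check of this kind can be sketched in a few lines. The example below is a minimal illustration, assuming the answers have already been collected as plain “true”/“false” strings. The function name and the sample data are hypothetical and are not taken from the study.

```python
from collections import Counter


def consistency_report(answers: list[str]) -> dict:
    """Summarize how often repeated answers to one question agree."""
    if not answers:
        raise ValueError("answers must not be empty")
    # Normalize case and whitespace so "True " and "true" count as the same answer.
    counts = Counter(a.strip().lower() for a in answers)
    majority_answer, majority_count = counts.most_common(1)[0]
    return {
        "majority_answer": majority_answer,
        "agreement": majority_count / len(answers),  # share of runs matching the majority
        "consistent": len(counts) == 1,              # True only if every run agreed
    }


# Made-up responses to the same question asked ten times.
sample = ["true", "false", "true", "true", "false",
          "true", "false", "true", "true", "false"]
report = consistency_report(sample)
print(report)
```

With this invented sample, the majority answer appears in only 6 of 10 runs, so the report flags the question as inconsistent. A reliable source would show full agreement on every run.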

For a user attempting to manage a chronic condition or evaluate an acute symptom, this variability is perilous. If a chatbot confirms a drug combination is safe in one session and warns of danger in the next, it proves that the tool cannot serve as a reliable source for critical medical decisions. The “correctness” of the answer depends more on the moment the question is asked than on medical truth.

This instability is precisely why clinical judgment, the human element of medicine, remains irreplaceable. A physician does not just process data; they integrate a patient’s physical exam, medical history, and nuanced behavioral cues to reach a diagnosis. AI currently lacks the ability to manage the inherent uncertainty of an initial medical encounter, where information is scarce or ambiguous.

The Shift Toward AI-Integrated Health Services

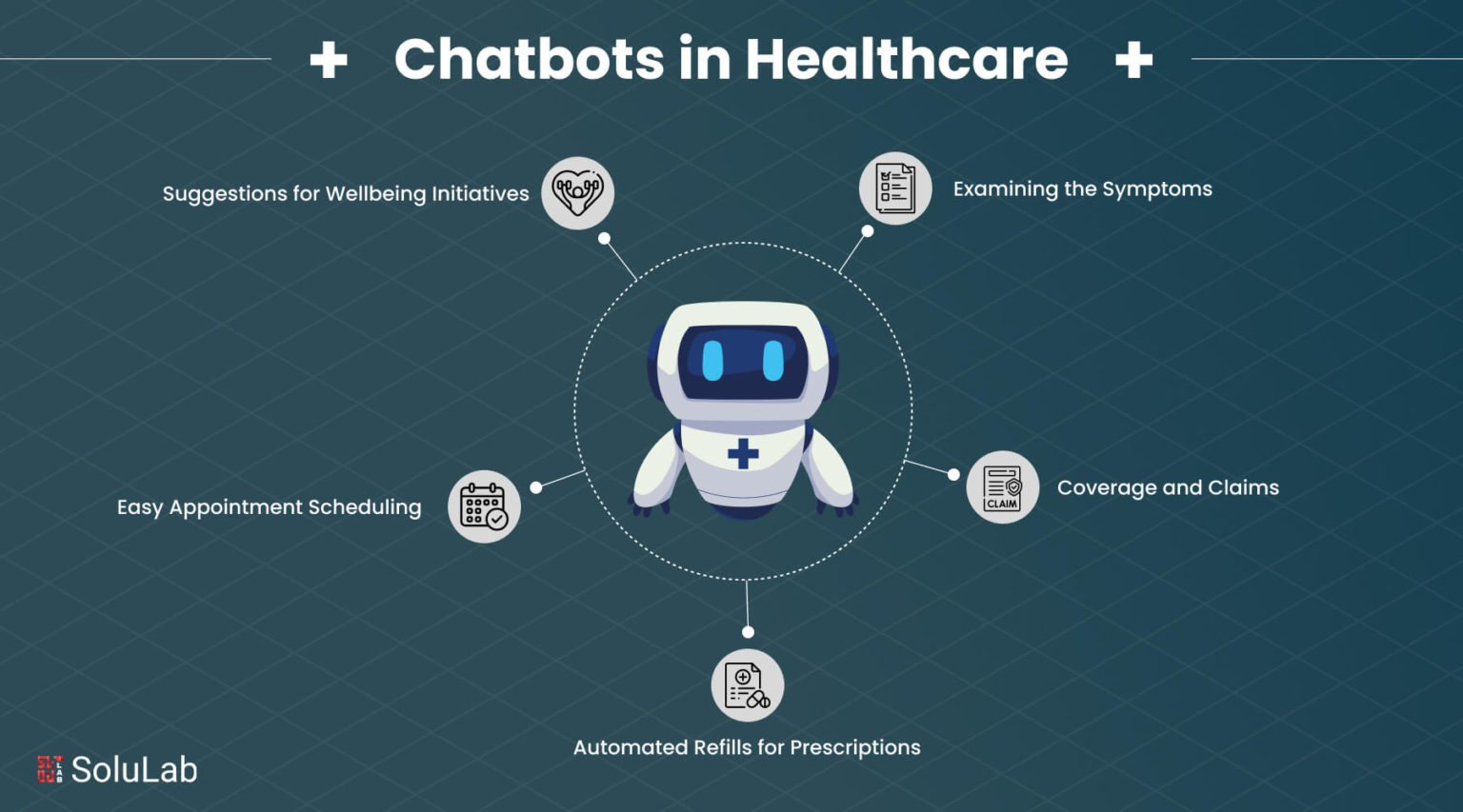

Despite these risks, the integration of AI into healthcare is accelerating. This is driven by two primary factors: the immediate availability of answers and the systemic difficulty of accessing healthcare services in many countries (Corriere della Sera).

We are seeing a transition from general-purpose chatbots to specialized health experiences. For example, OpenAI is introducing “ChatGPT Salute,” a dedicated experience designed to integrate health information more securely and to help users feel more informed and prepared in managing their health (Corriere della Sera).

While these specialized tools aim to improve safety and accuracy, they still operate within the constraints of LLM technology. The danger remains that users may mistake a more “polished” health interface for a licensed medical professional. The ability of AI to adapt scientific concepts to different levels of understanding is a powerful educational tool, but it is not a substitute for a diagnostic process.

Key Takeaways for Patients and Users

- Confidence $\neq$ Accuracy: Just because a chatbot sounds authoritative does not mean the information is medically correct.

- Check for Consistency: AI can deliver different answers to the same question in different sessions; never rely on a single AI response for medication or dosage.

- The 16.4% Gap: AI struggles significantly to identify medical myths and false claims, making it an unreliable tool for debunking misinformation.

- Complement, Don’t Replace: Use AI to understand technical terms or prepare questions for your doctor, but never to replace a clinical consultation.

Conclusion: The Future of the Doctor-Patient Relationship

The question of whether technology will replace the doctor is better framed as: how will technology change the doctor’s role? AI can handle the “administrative” side of information—summarizing reports or explaining a term—but it cannot replace the critical reasoning required for diagnosis and treatment in complex, uncertain clinical situations.

The current trajectory suggests that AI will develop into a ubiquitous first step in the patient journey. However, the “illusion” that a chatbot is a doctor remains a significant public health risk. The goal for the future is a hybrid model where AI improves health literacy, but the final, critical decisions remain in the hands of human professionals who are accountable for the patient’s life.

As AI continues to evolve, the next critical checkpoint will be the integration of these specialized health models into official clinical workflows and the subsequent validation of their accuracy in real-world medical settings.
