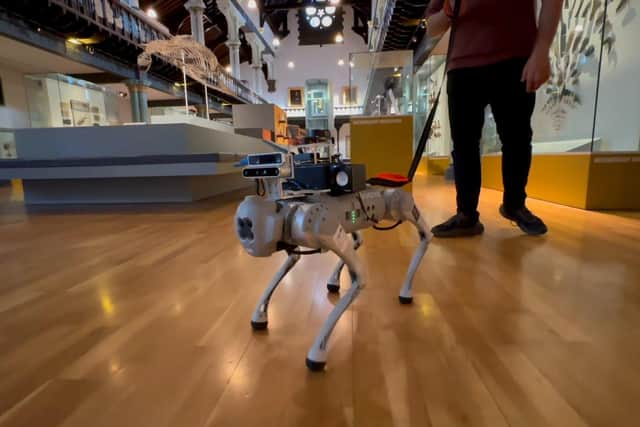

For decades, the bond between a visually impaired person and their guide dog has been one of silent trust and physical cues. Biological guide dogs are extraordinary allies, but their communication is limited to a small set of commands and the tension of a leash. Researchers at Binghamton University, State University of New York, are now pushing past that limit with an AI-powered robot guide dog capable of real-time, two-way voice conversation.

This work turns the guide from a silent navigator into an interactive partner. By integrating large language models (LLMs), the research team has built a system that does more than lead a user from point A to point B: it describes the user’s surroundings, discusses the route ahead, and answers complex spoken questions as they arise.

The project is led by Associate Professor Shiqi Zhang of the Thomas J. Watson College of Engineering and Applied Science’s School of Computing. Traditional robotic guidance typically relies on simple sensors or physical tugs on a leash; this system adds a layer of language understanding that gives users much richer situational awareness.

The GPT-4 Edge: Moving Beyond Simple Commands

At the core of the system is GPT-4, a large language model that lets the robot understand and generate natural human language. Communication with a traditional guide dog is far more constrained: according to Professor Zhang, biological guide dogs can understand about 20 commands at most. That vocabulary serves its purpose well, but it caps how much information can pass between dog and handler.

By pairing GPT-4 with voice interaction, the robotic guide dog moves past these limits. It can handle complex spoken exchanges, letting users ask specific questions about their environment or receive detailed instructions that a biological dog simply cannot give. This shift from “woofs to words” offers a level of control and nuance previously unavailable in guide-dog systems.

The system builds on the team’s earlier work. Previous versions of their robotic guide focused on physical interaction, leading visually impaired users in response to tugs on the leash. That approach worked, but the new voice-enabled system adds a “spoken back-and-forth,” which the researchers argue is critical for increasing users’ confidence and sense of autonomy during travel.

Mapping the World: Plan and Scene Verbalization

To make the robot truly useful for navigating complex environments, Professor Zhang’s team implemented two distinct communication frameworks: Plan Verbalization and Scene Verbalization.

Plan Verbalization occurs before the journey begins. The robot analyzes the destination and provides a verbal briefing of the possible routes and the estimated time required to reach the goal. This allows the user to understand the scope of their journey and make informed decisions before they even take their first step.
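The article does not say how the robot computes its route briefing. As a rough illustration only, the sketch below assumes the office is modeled as a weighted graph of named areas; the `OFFICE_MAP` layout, the walking speed, and the functions `candidate_routes` and `verbalize_plan` are all hypothetical. It finds the two shortest loop-free routes with a uniform-cost search and turns them into a short spoken briefing with distances and estimated times. A fixed string template stands in for whatever language generation the real system uses.

```python
import heapq

# Hypothetical office map: area -> list of (neighboring area, distance in meters).
OFFICE_MAP: dict[str, list[tuple[str, float]]] = {
    "lobby": [("hallway", 10.0), ("kitchen", 15.0)],
    "hallway": [("lobby", 10.0), ("kitchen", 4.0), ("Room 210", 12.0)],
    "kitchen": [("lobby", 15.0), ("hallway", 4.0), ("Room 210", 6.0)],
    "Room 210": [("hallway", 12.0), ("kitchen", 6.0)],
}

WALKING_SPEED_M_PER_S = 0.8  # assumed pace while being guided


def candidate_routes(graph, start, goal, max_routes=2):
    """Return up to max_routes loop-free routes as (distance, path), shortest first."""
    # Uniform-cost search over partial paths. With non-negative distances,
    # complete paths are popped in order of increasing total length.
    frontier = [(0.0, [start])]
    routes = []
    while frontier and len(routes) < max_routes:
        dist, path = heapq.heappop(frontier)
        node = path[-1]
        if node == goal:
            routes.append((dist, path))
            continue
        for nxt, step in graph[node]:
            if nxt not in path:  # never revisit an area, so routes stay loop-free
                heapq.heappush(frontier, (dist + step, path + [nxt]))
    return routes


def verbalize_plan(routes, goal):
    """Turn candidate routes into a short spoken briefing (template, not an LLM)."""
    parts = [f"I found {len(routes)} route(s) to {goal}."]
    for i, (dist, path) in enumerate(routes, start=1):
        seconds = dist / WALKING_SPEED_M_PER_S
        waypoints = ", ".join(path[1:-1])
        via = f"via {waypoints}" if waypoints else "directly"
        parts.append(
            f"Route {i} goes {via}: about {dist:.0f} meters, roughly {seconds:.0f} seconds."
        )
    return " ".join(parts)


routes = candidate_routes(OFFICE_MAP, "lobby", "Room 210")
print(verbalize_plan(routes, "Room 210"))
```

With this made-up map, a request for Room 210 from the lobby yields two routes of about 20 and 21 meters. Uniform-cost search fits here because, with non-negative distances, complete routes leave the priority queue in order of total length, so the first ones found are the shortest.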

Scene Verbalization happens in real time during transit. As the robot moves, it continuously monitors the surroundings and provides the user with updates on their environment and the location of obstacles. For individuals with limited or no vision, this real-time feedback is essential for maintaining situational awareness, reducing the anxiety of navigating unfamiliar spaces, and ensuring overall safety.
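The following is a hypothetical sketch of a scene-verbalization step, not the team’s implementation. It assumes a perception module (not described in the article) that emits detections with a tracking ID, a label, a distance, and a bearing; the `Detection` class, the 3-meter alert radius, and the 20-degree left/right threshold are illustrative choices. On each cycle it announces new obstacles within range, nearest first, and remembers which tracked objects it has already mentioned so the user is not flooded with repeats.

```python
from dataclasses import dataclass


# Hypothetical detection format; the real perception pipeline is not described.
@dataclass(frozen=True)
class Detection:
    track_id: int       # stable ID from an assumed object tracker
    label: str          # object class, e.g. "chair"
    distance_m: float   # distance from the robot in meters
    bearing_deg: float  # 0 = straight ahead, negative = left, positive = right


ALERT_RADIUS_M = 3.0       # assumed: only mention obstacles within 3 m
SIDE_THRESHOLD_DEG = 20.0  # assumed: beyond this bearing, say left/right


def direction_phrase(bearing_deg: float) -> str:
    """Map a bearing to a coarse spoken direction."""
    if bearing_deg < -SIDE_THRESHOLD_DEG:
        return "on your left"
    if bearing_deg > SIDE_THRESHOLD_DEG:
        return "on your right"
    return "ahead"


def verbalize_scene(detections: list[Detection], announced: set[int]) -> list[str]:
    """Return spoken updates for newly seen nearby obstacles, nearest first.

    `announced` holds track IDs already spoken; it is updated in place so the
    same object is not repeated on every perception cycle.
    """
    nearby = sorted(
        (d for d in detections if d.distance_m <= ALERT_RADIUS_M),
        key=lambda d: d.distance_m,
    )
    updates = []
    for d in nearby:
        if d.track_id in announced:
            continue
        announced.add(d.track_id)
        updates.append(
            f"{d.label.capitalize()} {direction_phrase(d.bearing_deg)}, "
            f"about {d.distance_m:.1f} meters."
        )
    return updates


# Example cycle: the table is outside the alert radius, so it is not mentioned.
frame = [
    Detection(1, "chair", 1.5, -30.0),
    Detection(2, "door", 2.5, 0.0),
    Detection(3, "table", 6.0, 10.0),
]
spoken: set[int] = set()
for line in verbalize_scene(frame, spoken):
    print(line)  # "Chair on your left, about 1.5 meters." then "Door ahead, about 2.5 meters."
```

Deduplicating by track ID rather than by label lets two different chairs both be announced. A real system would also need to re-announce an object that moves into the user’s path, which this sketch ignores.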

Together, the two modes mean the user is not simply pulled along a path but understands the “why” and “how” of their movement through the space.

From the Lab to the Office: Testing User Autonomy

The team tested the system in a controlled yet complex setting: seven legally blind participants navigated a large, multi-room office. Doorways, furniture, and changes in room layout gave the researchers realistic variables for evaluating how effectively the robot communicated obstacles and route changes.

The results highlighted the robot’s ability to provide feedback that traditional guidance methods lack. Because the system combines the physical guidance of a four-legged robot with the language capabilities of an LLM, participants navigated the office with greater situational awareness, and verbal confirmation of their surroundings emerged as a key factor in their mobility and safety.

Key Technical Takeaways

- Core Technology: Integration of GPT-4 for natural language processing and voice interaction.

- Communication Modes: “Plan Verbalization” for pre-trip routing and “Scene Verbalization” for real-time obstacle awareness.

- Comparative Advantage: Ability to handle complex verbal interactions far exceeding the ~20 commands understood by biological guide dogs.

- Validation: Tested with seven legally blind participants in a multi-room office environment.

- Academic Recognition: Presented at the 40th AAAI Artificial Intelligence Conference.

Academic Validation and Future Implications

The research, titled “From Woofs to Words: Towards Intelligent Robotic Guide Dogs with Verbal Communication,” was presented at the 40th AAAI Artificial Intelligence Conference. Its presentation at a major AI venue reflects growing interest in combining robotics with generative AI to tackle real-world accessibility challenges.

Professor Zhang has noted that this research demonstrates a version of a robotic guide dog that is, in specific linguistic aspects, more advanced than its biological counterparts. By equipping the robot with “very strong language capabilities,” the team has opened the door for future assistive devices that can act as both a physical guide and a cognitive interpreter of the physical world.

This development marks a shift in how we view assistive robotics. Rather than simply replacing a biological function, these systems augment the human experience by supplying information that was previously inaccessible. Integrating LLMs into mobility aids points to a future in which visually impaired people can navigate the world with a level of detail and confidence closer to that of sighted navigation.

As the technology evolves, the next steps will likely include handling more chaotic outdoor environments and reducing the latency of voice interactions so that “Scene Verbalization” reports obstacles as soon as they appear.

For readers following AI and accessibility, further publications and announcements from the Binghamton University research team will be the main indicators of how close this technology is to commercial or widespread clinical use.

We invite readers to share their thoughts on the integration of AI in accessibility. Do you think robotic guides will eventually complement or replace biological guide dogs? Let us know in the comments below.