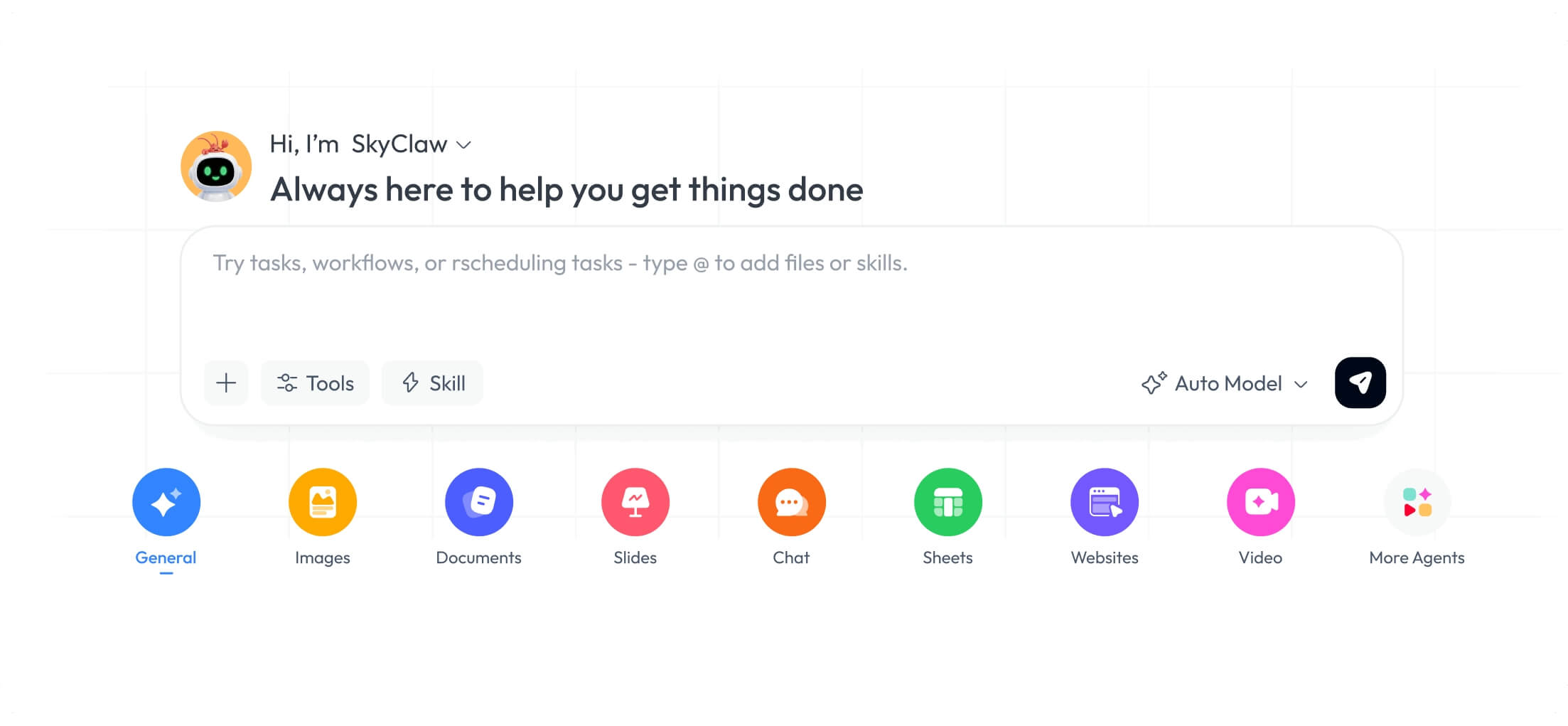

OpenClaw is gaining attention as a self-hosted personal AI assistant designed to run continuously on users’ own devices, offering a privacy-focused alternative to cloud-based AI tools. Unlike typical chatbots that operate only during interactions, OpenClaw functions as an autonomous agent capable of executing scheduled tasks, remembering context across sessions, and interacting directly with local systems and messaging platforms.

The platform emphasizes local-first architecture, meaning user data never leaves personal infrastructure, addressing growing concerns about AI privacy and data sovereignty. By running on hardware such as a Mac mini or Linux machine, OpenClaw enables millisecond response times without reliance on external APIs or third-party servers.

According to verified information from the project’s official GitHub repository, OpenClaw supports a wide range of communication channels including WhatsApp, Telegram, Discord, iMessage, Signal, Microsoft Teams, Matrix, and others. It can speak and listen on macOS, iOS, and Android, and render a live canvas interface that users control directly.

The OpenClaw Playbook, maintained by an autonomous agent running 24/7 on a Mac mini in San Francisco, provides a practical guide to setup and deployment. It covers installation via npm, workspace configuration, skill development, and cron automation for recurring tasks. The playbook stresses that OpenClaw is not a sandbox environment but a real autonomy framework where agents can execute commands, read and write files, and browse the web independently.

Core features highlighted in the documentation include persistent memory and context awareness, allowing the assistant to learn from user notes, health metrics, and personal goals over time. This enables deeply personalized insights that evolve with the user’s habits and preferences. The system also supports modular intelligence, letting users swap AI models or route tasks to specialized agents based on the provider they trust.
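To make the idea of routing tasks by trusted provider more concrete, here is a minimal Python sketch. It is not OpenClaw's actual configuration or API. The agent names, provider labels, and the `route_task` helper are all hypothetical and only illustrate the general pattern of a routing table with a fallback.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRoute:
    agent: str     # hypothetical agent name, not an OpenClaw identifier
    provider: str  # hypothetical model provider label


# Hypothetical routing table: task category -> specialized agent and provider.
ROUTES = {
    "coding": AgentRoute("code-agent", "provider-a"),
    "health": AgentRoute("health-agent", "local-model"),
}

# Fallback used when no route matches or the route's provider is not trusted.
DEFAULT_ROUTE = AgentRoute("general-agent", "local-model")


def route_task(category: str, trusted_providers: set[str]) -> AgentRoute:
    """Pick a route for a task, falling back when the provider is untrusted."""
    route = ROUTES.get(category, DEFAULT_ROUTE)
    if route.provider not in trusted_providers:
        # Assumption: the default route is always acceptable to the user.
        return DEFAULT_ROUTE
    return route
```

In this sketch, a user who trusts only `local-model` would see coding tasks fall back to the general agent, while health tasks would still reach their specialized agent.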

Security is built into the design through end-to-end encryption, local storage, and zero third-party dependencies. For users interested in collaborative automation, OpenClaw supports a “hive mind” model where multiple agents can coordinate tasks, share memory, and operate as a swarm—ideal for background monitoring or automated workflows.

The onboarding process is guided by the openclaw onboard command, which walks users through setting up the gateway daemon, configuring channels, and installing skills. OpenClaw is compatible with Node 24 (recommended) or Node 22.16+ and works across macOS, Linux, and Windows via WSL2. Installation registers a launchd or systemd user service so the assistant stays active even when not in direct use.
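Before running the onboarding command, it can help to confirm that the installed Node version meets the requirement stated above. The following Python sketch does that check. The function names and the three status labels are illustrative assumptions and are not part of OpenClaw. The only external call is `node --version`, which prints a string such as `v22.16.0`.

```python
import re
import subprocess

# Thresholds taken from the article: Node 24 is recommended, 22.16 is the floor.
MIN_SUPPORTED = (22, 16)
RECOMMENDED_MAJOR = 24


def parse_node_version(raw: str) -> tuple[int, int, int]:
    """Parse a string such as 'v22.16.0' into (major, minor, patch)."""
    match = re.fullmatch(r"v?(\d+)\.(\d+)\.(\d+)", raw.strip())
    if match is None:
        raise ValueError(f"unrecognized Node version string: {raw!r}")
    major, minor, patch = (int(part) for part in match.groups())
    return major, minor, patch


def classify_node_version(raw: str) -> str:
    """Return 'recommended', 'supported', or 'unsupported' (labels are assumptions)."""
    major, minor, _ = parse_node_version(raw)
    if major == RECOMMENDED_MAJOR:
        return "recommended"
    if (major, minor) >= MIN_SUPPORTED:
        return "supported"
    return "unsupported"


def installed_node_version() -> str:
    """Ask the local Node binary for its version; raises if node is missing."""
    result = subprocess.run(
        ["node", "--version"], capture_output=True, text=True, check=True
    )
    return result.stdout.strip()
```

A typical use would be `classify_node_version(installed_node_version())`, run once before starting onboarding. Treating every release from 22.16 upward as supported is this sketch's reading of "22.16+", so check the project's documentation for the exact range.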

While the project does not currently list institutional partnerships or funding sources in its public documentation, its open-source nature allows for community-driven development and transparency. Users are encouraged to consult the official Getting Started guide and Vision document for long-term project direction.

As interest grows in self-hosted AI solutions that prioritize control and continuity, OpenClaw represents a notable option for developers and power users seeking to move beyond transient chatbot interactions toward persistent, agent-based computing.

For the latest updates, users can refer to the project’s GitHub repository or the OpenClaw Playbook, both of which are regularly maintained by the core team and contributors.

Have experience with self-hosted AI agents? Share your thoughts in the comments below, and feel free to pass this along to others exploring private, always-on AI alternatives.