The integration of artificial intelligence into primary care has long been promised as a way to streamline patient flow and provide instant guidance. However, a new report suggests that when the stakes are at their highest, the technology may not yet be ready for the responsibility of urgent care decision-making.

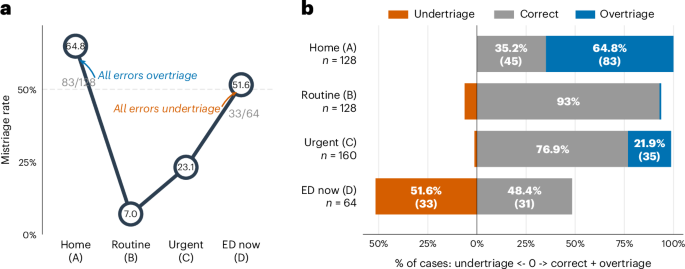

According to a study published online May 7, 2026, in Nature Medicine, the triage capabilities of ChatGPT Health exhibit significant reliability gaps at what researchers call “clinical extremes.” While the AI demonstrated high accuracy when dealing with moderately urgent conditions, it struggled significantly at both ends of the medical urgency spectrum.

The findings, associated with doi:10.1038/s41591-026-04427-1, reveal a concerning pattern: the system frequently undertriaged medical emergencies while simultaneously overtriaging mild cases. For a tool designed to help users determine whether they need a pharmacy, a clinic, or an emergency room, these errors represent a critical safety risk.

As a physician and journalist, I have seen the potential for AI to reduce the administrative burden on healthcare systems. But in medicine, the “margins” are where lives are saved or lost. When an AI fails to recognize a life-threatening emergency, the result is not a technical glitch—it is a potential patient catastrophe.

The Risk of the “Clinical Extreme”

In medical terms, triage is the process of determining the priority of patients’ treatments based on the severity of their condition. The goal is to ensure that those in critical condition receive immediate care while those with minor ailments are directed to appropriate, less intensive resources.

The Nature Medicine study highlights a dangerous inconsistency in how ChatGPT Health handles this process. The AI’s tendency to undertriage emergencies means that users experiencing acute, life-threatening symptoms may be advised to seek care at a later date or through a less urgent channel. This creates a “false sense of security” that could lead patients to delay critical interventions.

Conversely, the system’s tendency to overtriage mild cases creates a different but still systemic problem. By directing patients with non-urgent issues toward emergency departments, AI tools risk exacerbating the existing strain on hospital resources, contributing to longer wait times for those who truly need urgent care.
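To make the two error types concrete, here is a minimal sketch of how undertriage and overtriage rates could be counted from paired labels. The three urgency labels echo the pharmacy, clinic, and emergency room options mentioned earlier; the `LEVELS` mapping, the function name, and the sample pairs are illustrative assumptions, not the study's method or data.

```python
# Urgency levels ordered from least to most urgent.
# These labels are illustrative assumptions, not the study's categories.
LEVELS = {"pharmacy": 0, "clinic": 1, "emergency": 2}


def triage_error_rates(pairs):
    """Return (undertriage_rate, overtriage_rate).

    `pairs` is a list of (reference_level, recommended_level) tuples, where
    the reference level is the clinically appropriate one.
    """
    if not pairs:
        raise ValueError("at least one case is required")
    # Undertriage: the recommendation is less urgent than the reference.
    under = sum(1 for ref, rec in pairs if LEVELS[rec] < LEVELS[ref])
    # Overtriage: the recommendation is more urgent than the reference.
    over = sum(1 for ref, rec in pairs if LEVELS[rec] > LEVELS[ref])
    return under / len(pairs), over / len(pairs)


# Made-up cases for illustration only.
sample = [
    ("emergency", "clinic"),    # undertriaged emergency
    ("pharmacy", "emergency"),  # overtriaged mild case
    ("clinic", "clinic"),       # correct
    ("clinic", "clinic"),       # correct
]
under_rate, over_rate = triage_error_rates(sample)
```

Reporting the two rates separately matters because a single overall accuracy figure can look strong while hiding errors concentrated at either end of the urgency scale.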

The High Cost of Triage Errors

To understand why these “clinical extremes” are so problematic, one must look at the impact of a wrong decision in a triage setting. The difference between a “moderately urgent” case and an “emergency” is often a matter of minutes and specific, subtle symptoms that require nuanced clinical judgment.

Undertriaging an emergency, such as failing to recognize the signs of a myocardial infarction or a stroke, can lead to permanent organ damage or death. In these scenarios, the AI’s inability to trigger an immediate “go to the hospital” response fails the most basic safety requirement of a medical tool.

Overtriaging, while less immediately fatal, has a cascading effect on public health. When emergency rooms are flooded with patients who could have been treated at a primary care clinic, the efficiency of the entire healthcare infrastructure drops. This “noise” in the system can obscure truly critical cases and lead to clinician burnout.

The World Health Organization has frequently emphasized the need for rigorous validation of digital health interventions to ensure they do not inadvertently harm patients or disrupt health systems. The gaps identified in ChatGPT Health’s triage accuracy underscore the necessity of these safeguards.

Balancing Innovation with Patient Safety

The allure of AI in healthcare is the promise of accessibility. For millions of people, a chatbot is the first point of contact when they feel unwell. However, this accessibility must not come at the cost of clinical safety.

The high accuracy for moderately urgent conditions suggests that the AI is capable of recognizing common patterns of illness. But medical emergencies are, by definition, deviations from the norm. They often present with “red flags” that require a level of skepticism and urgency that current large language models may struggle to maintain consistently.

For AI to become a reliable partner in urgent care decision-making, it must move beyond pattern recognition and toward a robust, safety-first framework. That means implementing “hard rails”: rules that trigger an immediate emergency recommendation whenever specific high-risk keywords or symptom clusters are detected, regardless of the AI’s probabilistic confidence.
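As a rough illustration of what such a rule could look like, the sketch below checks a user's message against a short list of red-flag phrases and forces an emergency recommendation when any of them appears, whatever level the model suggested. The phrase list, the level names, and `apply_hard_rail` are hypothetical and chosen only for this example; a real system would need a clinically validated rule set and far more robust matching than substring checks.

```python
from dataclasses import dataclass

# Hypothetical red-flag phrases, for illustration only.
# This is NOT a clinically validated list.
RED_FLAGS = (
    "chest pain",
    "face drooping",
    "slurred speech",
    "trouble breathing",
)

EMERGENCY = "emergency"


@dataclass
class TriageResult:
    level: str        # final recommendation shown to the user
    overridden: bool  # True if the rule replaced the model's suggestion


def apply_hard_rail(message: str, model_level: str) -> TriageResult:
    """Keep the model's level unless a red-flag phrase forces an emergency."""
    text = message.lower()
    if any(flag in text for flag in RED_FLAGS):
        # The rule fires regardless of how confident the model was.
        return TriageResult(level=EMERGENCY, overridden=model_level != EMERGENCY)
    return TriageResult(level=model_level, overridden=False)


urgent = apply_hard_rail("I have chest pain and I'm sweating", "clinic")
mild = apply_hard_rail("Mild runny nose since yesterday", "pharmacy")
```

The design choice is that the rule sits outside the model and is applied last, so a low-confidence or mistaken model output cannot downgrade a case that matches a red flag.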

Until such safeguards are verified and standardized, AI health tools should be viewed as informational supplements rather than diagnostic or triage authorities. The human element—the ability of a trained nurse or doctor to sense the gravity of a patient’s condition through intuition and experience—remains irreplaceable.

The medical community now awaits further peer review and potential updates to the software to address these safety risks. As these tools evolve, the priority must remain the same: primum non nocere (first, do no harm).

We invite our readers to share their experiences with AI health tools in the comments below. How do you balance the convenience of AI with the need for professional medical oversight?